Migrating from TimescaleDB to Arc: Your Hypertables Deserve Better

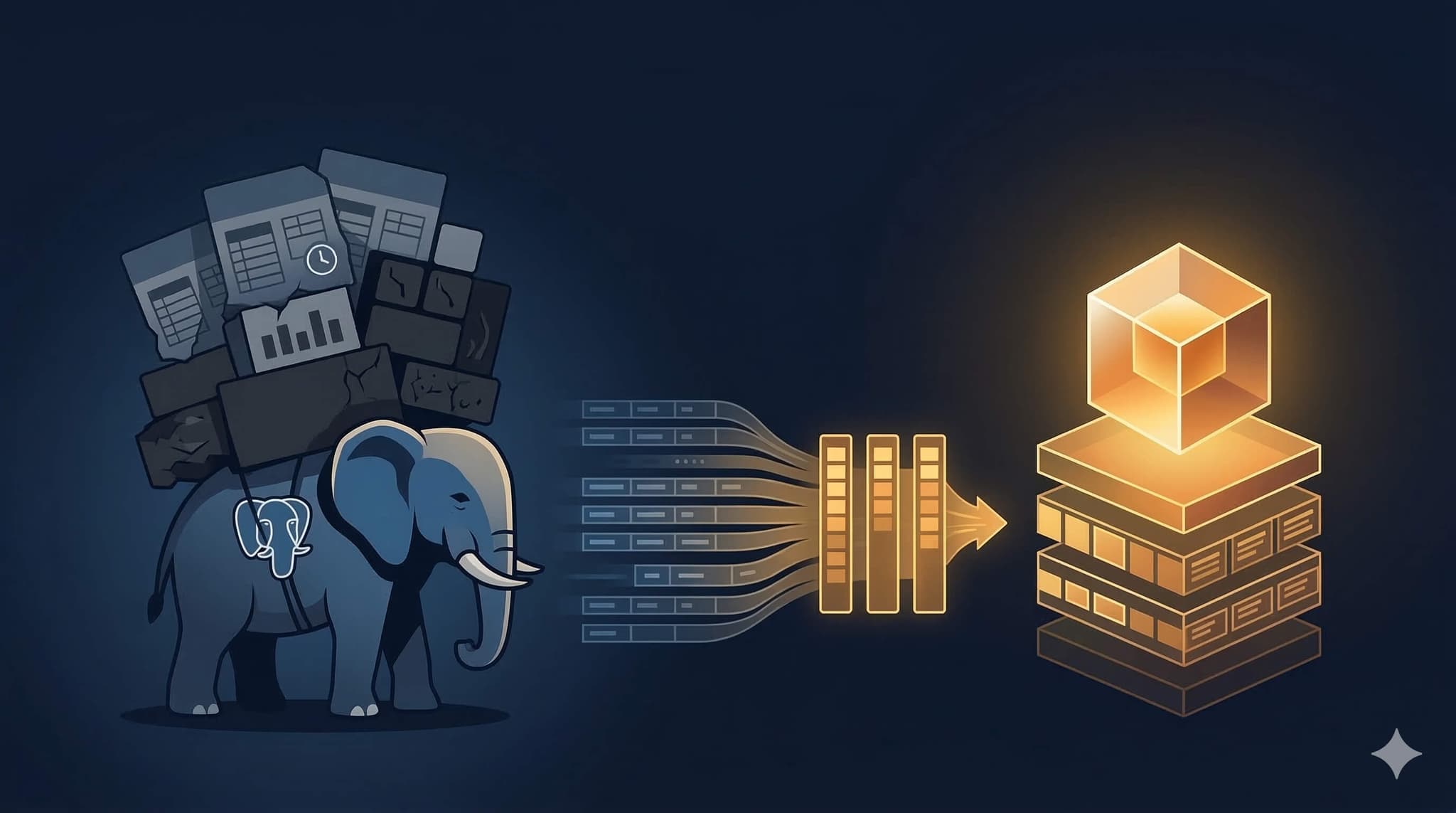

I have a lot of respect for TimescaleDB. Taking PostgreSQL — the most trusted database in the world — and bolting on time-series capabilities was a smart move. Hypertables, columnar compression, continuous aggregates — they built real features on top of a real foundation.

But here's the thing: it's still PostgreSQL underneath. Every write goes through the heap, the WAL, the chunk management layer. Every query goes through the PostgreSQL planner. And when you're ingesting millions of records per second, that foundation becomes a ceiling.

We benchmarked Arc against TimescaleDB on ClickBench last week. 4.6x faster on combined score. 6.5x faster on hot runs. 9.6x faster on ingestion. Same hardware.

If those numbers caught your attention and you're wondering what a migration looks like — it's a \COPY and a curl. This tutorial walks through the whole thing.

The Migration Strategy

Same approach as any production migration: don't flip a switch. Run both systems in parallel.

- Start writing to Arc now — point Telegraf at Arc using our dedicated output plugin. Keep writing to TimescaleDB too. Telegraf supports multiple outputs — use both.

- Migrate historical data — export hypertables as CSV, import into Arc via the CSV endpoint. Do this at your own pace. One table at a time, one night at a time.

- Verify — compare row counts, time ranges, query results between both systems.

- Cut over — once you trust Arc, remove the TimescaleDB pipeline. Swap the Grafana datasource. Done.

TimescaleDB stays live the entire time. If something doesn't look right, you haven't lost anything.

Before You Start

You'll need:

- A TimescaleDB instance with data you want to move

- Arc running (v26.02.2 or later — that's when the CSV import endpoint shipped)

psqlandcurl

Start Arc if you haven't:

docker run -d -p 8000:8000 \

-e STORAGE_BACKEND=local \

-v arc-data:/app/data \

ghcr.io/basekick-labs/arc:latestNo PostgreSQL to configure. No extensions to install. No shared_buffers to tune.

Step 1: Export from TimescaleDB

TimescaleDB is PostgreSQL, so you export with \COPY. One hypertable at a time:

psql -h localhost -U postgres -d mydb -c \

"\COPY (SELECT * FROM cpu ORDER BY time) TO 'cpu.csv' WITH CSV HEADER"Do this for each hypertable you want to migrate:

psql -h localhost -U postgres -d mydb -c \

"\COPY (SELECT * FROM memory ORDER BY time) TO 'memory.csv' WITH CSV HEADER"

psql -h localhost -U postgres -d mydb -c \

"\COPY (SELECT * FROM disk ORDER BY time) TO 'disk.csv' WITH CSV HEADER"Export a Specific Time Range

Don't need the full history? Filter it:

psql -h localhost -U postgres -d mydb -c \

"\COPY (SELECT * FROM cpu WHERE time > '2025-01-01' ORDER BY time) TO 'cpu_2025.csv' WITH CSV HEADER"Compress for Faster Transfers

Arc auto-detects gzip. No flags needed:

gzip cpu.csvHow Big Are My Hypertables?

Before you start exporting, check what you're dealing with:

SELECT hypertable_name,

num_chunks,

pg_size_pretty(hypertable_size(format('%I.%I', hypertable_schema, hypertable_name)::regclass)) as size

FROM timescaledb_information.hypertables;This tells you how many chunks each hypertable has and how much disk space it uses. Plan your export accordingly.

Step 2: Import into Arc

One command per table:

curl -X POST "http://localhost:8000/api/v1/import/csv?measurement=cpu&time_column=time&time_format=epoch_s" \

-H "Authorization: Bearer $ARC_TOKEN" \

-H "X-Arc-Database: mydb" \

-F "file=@cpu.csv"Or with a gzipped file:

curl -X POST "http://localhost:8000/api/v1/import/csv?measurement=cpu&time_column=time&time_format=epoch_s" \

-H "Authorization: Bearer $ARC_TOKEN" \

-H "X-Arc-Database: mydb" \

-F "file=@cpu.csv.gz"Each hypertable becomes a measurement (table) in Arc. The CSV headers become columns. Arc handles the schema automatically.

Time Format Options

TimescaleDB timestamps can be in different formats depending on your schema. Tell Arc what to expect:

| Parameter | Format |

|---|---|

epoch_s | Unix timestamp in seconds |

epoch_ms | Unix timestamp in milliseconds |

epoch_us | Unix timestamp in microseconds |

epoch_ns | Unix timestamp in nanoseconds |

If your TimescaleDB column is a TIMESTAMPTZ and exports as something like 2025-06-15 14:30:00+00, Arc's auto-detection should handle it. If not, convert to epoch in your export query:

psql -h localhost -U postgres -d mydb -c \

"\COPY (SELECT EXTRACT(EPOCH FROM time)::bigint AS time, host, usage_idle, usage_system FROM cpu ORDER BY time) TO 'cpu.csv' WITH CSV HEADER"Batch It

Got a lot of hypertables? Script it:

for table in cpu memory disk network; do

echo "Exporting $table..."

psql -h localhost -U postgres -d mydb -c \

"\COPY (SELECT * FROM $table ORDER BY time) TO '${table}.csv' WITH CSV HEADER"

echo "Importing $table into Arc..."

curl -X POST "http://localhost:8000/api/v1/import/csv?measurement=${table}&time_column=time&time_format=epoch_s" \

-H "Authorization: Bearer $ARC_TOKEN" \

-H "X-Arc-Database: mydb" \

-F "file=@${table}.csv"

doneExport, import, next table. Let it run overnight if you need to.

Prefer Python?

If you'd rather script the migration in Python — or if you need to transform data on the way in — the Arc Python SDK lets you read from TimescaleDB with psycopg2 or SQLAlchemy, transform with pandas or polars, and write directly to Arc at millions of records per second via MessagePack. No CSV files, no curl.

Step 3: Verify

Check that everything landed:

curl -X POST "http://localhost:8000/api/v1/query" \

-H "Authorization: Bearer $ARC_TOKEN" \

-H "Content-Type: application/json" \

-d '{

"database": "mydb",

"sql": "SELECT COUNT(*) as total_rows, MIN(time) as earliest, MAX(time) as latest FROM cpu"

}'Compare with your TimescaleDB source:

SELECT COUNT(*), MIN(time), MAX(time) FROM cpu;Numbers match? Time range looks right? Historical data is done.

Step 4: Point Telegraf at Arc

If you followed the migration strategy, you've been dual-writing. Now drop the TimescaleDB output.

Arc has a dedicated Telegraf output plugin — [[outputs.arc]] — available since Telegraf 1.33. MessagePack columnar format with gzip compression.

During migration — write to both:

# Keep TimescaleDB while migrating historical data

[[outputs.postgresql]]

connection = "host=localhost user=postgres dbname=mydb sslmode=disable"

# New data goes to Arc too

[[outputs.arc]]

url = "http://arc:8000/api/v1/write/msgpack"

api_key = "$ARC_TOKEN"

content_encoding = "gzip"

database = "mydb"After migration — Arc only:

[[outputs.arc]]

url = "http://arc:8000/api/v1/write/msgpack"

api_key = "$ARC_TOKEN"

content_encoding = "gzip"

database = "mydb"Four lines. No connection strings, no SSL modes, no database users.

See our Telegraf output plugin tutorial for the full setup.

Step 5: Translate Your Queries

Here's the good news: TimescaleDB is SQL. Arc is SQL. DuckDB SQL is PostgreSQL-compatible. Most of your queries work with minimal changes — or no changes at all.

What Just Works

These TimescaleDB patterns port directly to Arc:

-- This works in both TimescaleDB and Arc

SELECT

time_bucket(INTERVAL '5 minutes', time) AS bucket,

host,

AVG(usage_idle) AS avg_idle

FROM cpu

WHERE time > NOW() - INTERVAL '1 hour'

GROUP BY bucket, host

ORDER BY buckettime_bucket() is available in DuckDB SQL. Same syntax, same behavior. If your dashboards are built on time_bucket(), they just work.

Standard aggregations, WHERE clauses, GROUP BY, ORDER BY, LIMIT, window functions, CTEs, subqueries, CASE expressions — all the same.

What Needs Minor Changes

first() and last() (TimescaleDB extensions):

-- TimescaleDB

SELECT device, last(value, time) FROM sensors GROUP BY device

-- Arc (DuckDB SQL)

SELECT DISTINCT ON (device)

device,

value,

time

FROM sensors

ORDER BY device, time DESCContinuous aggregates → Continuous queries:

TimescaleDB continuous aggregates are materialized views that auto-refresh. Arc has continuous queries that do the same thing — run on a schedule, materialize results into a target table.

The SQL inside is nearly identical. The wrapper changes from CREATE MATERIALIZED VIEW ... WITH (timescaledb.continuous) to Arc's continuous query API.

Hypertable-specific functions:

| TimescaleDB | Arc equivalent |

|---|---|

time_bucket() | time_bucket() (same) |

first(value, time) | DISTINCT ON + ORDER BY |

last(value, time) | DISTINCT ON + ORDER BY DESC |

time_bucket_gapfill() | time_bucket() + generate series |

interpolate() | Window functions |

hypertable_size() | Not needed — check Parquet files directly |

chunks_detailed_size() | Not needed — standard file system |

The bottom line: if your SQL is standard PostgreSQL, it works. If it uses TimescaleDB-specific functions, there's a DuckDB SQL equivalent.

Percentiles

-- TimescaleDB

SELECT percentile_cont(0.95) WITHIN GROUP (ORDER BY response_time) FROM requests

-- Arc (same syntax!)

SELECT percentile_cont(0.95) WITHIN GROUP (ORDER BY response_time) FROM requestsYeah. Same thing.

Step 6: Switch Grafana

Arc has its own Grafana datasource plugin — https://github.com/Basekick-Labs/grafana-arc-datasource. If you're coming from the PostgreSQL datasource in Grafana (which is how most people connect to TimescaleDB), the switch is straightforward: install the Arc plugin, point it at Arc, and your SQL panel queries carry over with minimal changes.

We recently shipped v1.1.0 with 850x data reduction and query splitting. See the Grafana integration tutorial for the full setup.

What You Get After Migration

| TimescaleDB | Arc | |

|---|---|---|

| Storage | PostgreSQL heap + WAL | Parquet files |

| Compression | TimescaleDB columnstore (~2x) | Parquet columnar (3-5x) |

| Query engine | PostgreSQL planner | DuckDB (analytical-optimized) |

| SQL | PostgreSQL SQL | DuckDB SQL (PostgreSQL-compatible) |

| Partitioning | Hypertables (automatic chunks) | Time-based Parquet partitions |

| Data portability | pg_dump | Direct Parquet file access |

| Operational overhead | PostgreSQL + extension | Single binary |

Your data moves from PostgreSQL heap storage to portable Parquet files. Query them with Arc, DuckDB, Spark, Polars, or anything that reads Parquet. No more pg_dump as your only export path.

And the performance difference isn't subtle. On the same hardware, Arc is 4.6x faster on ClickBench combined score and 9.6x faster on ingestion.

The Checklist

- Dual-write — Add

[[outputs.arc]]to Telegraf alongside TimescaleDB - Export —

\COPYhypertables as CSV files - Import —

curl -X POSTto Arc's/api/v1/import/csv - Verify — Row counts and time ranges match across both systems

- Queries — Most SQL ports directly, translate TimescaleDB-specific functions

- Grafana — Install Arc datasource, update panel queries

- Cut over — Remove TimescaleDB output from Telegraf

- Done — Shut down TimescaleDB when you're confident

The Honest Bit

TimescaleDB is a great product for teams that need PostgreSQL compatibility with transactional guarantees and row-level UPDATEs. If your workload depends on those, keep using it.

But if you're running append-heavy analytical workloads — ingestion pipelines, observability, IoT telemetry, product analytics — you're paying the PostgreSQL tax on every write and every query. Arc was built for exactly these workloads, and the numbers back it up.

Need Help?

Most migrations are a few \COPY commands and a config change. If you hit something unusual — complex continuous aggregates, custom compression policies, or multi-node setups — we'll help.

- Discord: discord.gg/nxnWfUxsdm

- GitHub: https://github.com/Basekick-Labs/arc

- Docs: CSV Import endpoint

- Email: enterprise@basekick.net

Questions about the migration? Open an issue on GitHub or find us on Discord. We've done this before.

Ready to handle billion-record workloads?

Deploy Arc in minutes. Own your data in Parquet. Use for analytics, observability, AI, IoT, or data warehousing.